What comes to your mind when you hear “Meta AI”?

Trash???

Well, you’re not alone. Things haven’t been going well for Meta. The Llama series is no longer leading, and you won’t see Meta AI in the top frontier AI lists anymore.

But here’s the thing: we’re still in the research phase of AI. And like other labs, Meta is still strong at research.

One area they are focusing on is the human brain. And that’s not easy to understand. I’m not just talking about psychology, but about how the brain reacts to things and how neurons change. That’s what makes neuroscience such a difficult field.

Recently, Meta released a research project called TRIBE v2, and they claim it could be something big in neuroscience.

So if you’re into AI and research, this article will be worth your time.

What is TRIBE v2?

TRIBE v2 is a “foundation model” for the human brain. It is an AI model trained to predict how a human brain will respond when exposed to different stimuli.

It is tri-modal. That means it takes in three types of data:

- Video

- Audio

- Language

The researchers at Meta fed this AI a huge amount of data. We are talking about over 1,000 hours of brain scans (fMRI data) across 720 different human subjects.

During these scans, those 720 people were doing highly natural things. They were watching movies like The Wolf of Wall Street, listening to podcasts, and reading stories.

They also built the system on top of existing pretrained models such as V-JEPA (for video) and Llama (for language). By learning from all this data, TRIBE v2 picked up patterns in how the human brain behaves.

For example, if you watch a video, read text, or listen to audio, the model can predict which specific cortical region of your brain will respond.

Meta has released this model as open source because they believe it can help advance neuroscience research. They also shared demos comparing real brain activity with the model’s predictions, and honestly, the results are quite impressive.

Now, you might be wondering why predicting brain activity matters so much.

It matters because of what it allows us to do next.

Historically, if you wanted to test a theory about the brain, you needed real human beings. You needed funding, ethical approvals, and months of time just to gather data from a few dozen people.

TRIBE v2 introduces something called “in-silico” experimentation.

In simple terms: we can now run neuroscience experiments inside a computer, without needing a physical human brain.

Because TRIBE v2’s predictions are accurate enough, researchers can simply feed the AI a video or a sound and ask, “How would a human brain react to this?”

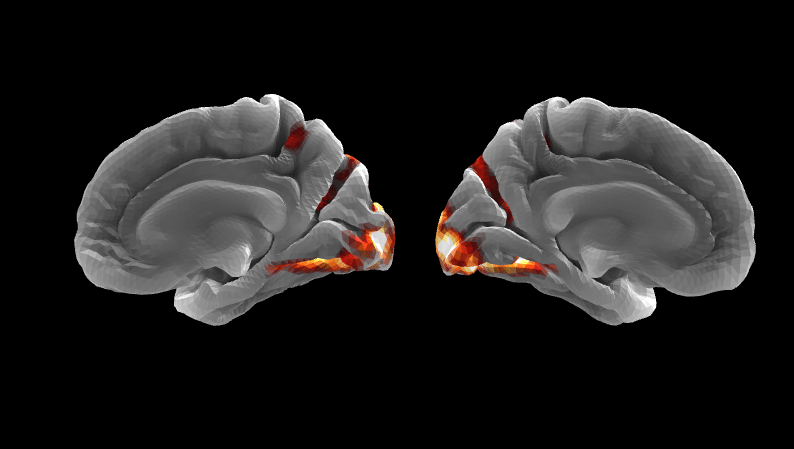

The model will output a high-resolution map of the brain, showing which regions it predicts would light up.

To prove this actually works, the Meta researchers went a step further. They took classic, well-known neuroscience experiments from the past and ran them entirely through TRIBE v2.

For example, we already know from decades of research that a specific part of the brain (the Fusiform Face Area) lights up when we see human faces.

So now, when researchers show an image of a face to the AI, the exact same digital brain region lights up.

They tested it with places, body parts, written words, and spoken sentences. In each case, the paper reports that the AI predicted the expected brain response. It recovered classic neuroscience findings without using a single new human test subject.

Image from META research
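To make the idea of an in-silico localizer concrete, here is a minimal toy sketch. It does not use TRIBE v2 or its API. The "model" is a hypothetical random linear map (`predict_response`), the face-selective patch is planted by hand, and every name and number is an assumption made for illustration. The point is only the logic of the experiment: predict responses to two stimulus categories, subtract, and see which vertices stand out.

```python
import numpy as np

rng = np.random.default_rng(0)
n_vertices, n_features = 1000, 8

# Hypothetical stand-in for a trained encoding model: a fixed linear map
# from stimulus features to predicted activity at each cortical vertex.
weights = rng.normal(0.0, 0.1, size=(n_vertices, n_features))
face_patch = np.arange(100, 120)   # toy "face-selective" vertices, planted by hand
weights[face_patch, 0] += 2.0      # feature 0 plays the role of "face-ness"

def predict_response(features):
    """Predicted activity per vertex for stimuli of shape (n_stimuli, n_features)."""
    return features @ weights.T

def make_stimuli(n, faceness):
    """Random toy stimuli whose first feature encodes how face-like they are."""
    feats = rng.normal(0.0, 1.0, size=(n, n_features))
    feats[:, 0] = faceness + rng.normal(0.0, 0.1, size=n)
    return feats

faces = make_stimuli(50, faceness=1.0)
places = make_stimuli(50, faceness=0.0)

# Classic localizer contrast (faces minus places), computed on model
# predictions instead of on real scans.
contrast = predict_response(faces).mean(axis=0) - predict_response(places).mean(axis=0)
top = np.sort(np.argsort(contrast)[-20:])
print("Most face-selective vertices:", top)
```

In a real study the stimuli would be actual images or videos and the predictions would come from the trained model, but the contrast step would look much the same.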

It Can Generalize to New Minds

Every human brain is a little different. The way your brain processes a podcast might not be exactly the same as mine. That’s why we can have different opinions about the same thing.

Normally, AI models struggle with this. If they are trained on Subject A, they are terrible at predicting Subject B.

TRIBE v2 solved this. It can perform “zero-shot” generalization.

That means you can take brand-new people, ones the AI has never seen before, and the AI can accurately predict their group-averaged brain response to a new stimulus.

In fact, the paper claims that TRIBE v2’s prediction matches the general (group-averaged) human response to a video better than a single person’s actual brain scan does.
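That claim can sound strange at first, so here is a small simulation of why it is plausible. This is a toy with made-up numbers, not the paper's analysis: it assumes every subject's scan is a shared stimulus-driven signal plus a lot of individual noise, and that a good model recovers the shared signal with only a small error.

```python
import numpy as np

rng = np.random.default_rng(0)
n_timepoints, n_subjects = 2000, 20

# Assumption: each scan = shared stimulus-driven signal + subject-specific noise.
shared = rng.normal(size=n_timepoints)
scans = shared + 2.0 * rng.normal(size=(n_subjects, n_timepoints))

held_out = scans[0]                  # one individual's noisy scan
group_avg = scans[1:].mean(axis=0)   # average of everyone else

# Assumption: the model's prediction is the shared signal plus a small error.
model_pred = shared + 0.5 * rng.normal(size=n_timepoints)

def corr(a, b):
    """Pearson correlation between two time series."""
    return float(np.corrcoef(a, b)[0, 1])

r_single = corr(held_out, group_avg)
r_model = corr(model_pred, group_avg)
print(f"single subject vs group average: {r_single:.2f}")
print(f"model prediction vs group average: {r_model:.2f}")
```

Averaging many people cancels out individual noise, while a single scan keeps all of its own noise. A model that has learned the shared signal can therefore sit closer to the average than any one person does.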

And if researchers want to make the model highly specific to you?

They only need about an hour of your brain scan data to “fine-tune” the model. Once they do that, the AI basically becomes a digital twin of your specific brain’s functional mapping.

Also, one of the most beautiful insights from this paper is how it shows that the brain’s systems are connected.

When the researchers tested the AI on just one modality, like only feeding it text or only feeding it audio, the model performed okay.

Text data helped predict the language centers of the brain. Video data helped predict the visual cortex.

But when they gave the AI all three modalities at once, the predictive power skyrocketed.

They found specific areas of the brain, like the temporal-parietal-occipital junction, where the AI’s accuracy jumped by 50%.

This suggests that these regions are highly specialized for “multisensory integration.” They don’t just process sound or sight. They process the connection between them.

By looking at the AI’s internal structure, researchers could actually map out how our brains blend different senses together to understand the world.
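The single-modality versus all-modalities comparison is a standard ablation, and it can be sketched with a toy linear encoding model. This is not TRIBE v2's architecture. The features are random, the simulated "integration region" is assumed to depend on all three inputs, and the helper names (`ridge_fit`, `score`) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n_train, n_test, d = 800, 200, 5
n = n_train + n_test

# Toy feature streams for each modality (random stand-ins for real embeddings).
video = rng.normal(size=(n, d))
audio = rng.normal(size=(n, d))
text = rng.normal(size=(n, d))

# Assumption: this simulated region is driven by all three modalities plus noise.
y = (video @ rng.normal(size=d) + audio @ rng.normal(size=d)
     + text @ rng.normal(size=d) + rng.normal(size=n))

def ridge_fit(X, y, alpha=1.0):
    """Closed-form ridge regression weights."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)

def score(X):
    """Fit on the training split, return correlation on the held-out split."""
    w = ridge_fit(X[:n_train], y[:n_train])
    return float(np.corrcoef(X[n_train:] @ w, y[n_train:])[0, 1])

inputs = {
    "video": video,
    "audio": audio,
    "text": text,
    "all": np.hstack([video, audio, text]),
}
scores = {name: score(X) for name, X in inputs.items()}
for name, r in scores.items():
    print(f"{name:>5}: r = {r:.2f}")
```

A region whose score rises sharply only when all inputs are present is a candidate integration area, which is the kind of pattern the paper describes.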

My Take

Now, whatever you’re about to read is just my personal opinion. You can disagree with it, and I’m open to thinking about it from a different perspective.

So basically, we are dealing with two things here: first, this AI model, and second, what happens if we combine this model with Meta.

But first, let’s talk about the AI model.

Right now, we have an AI model that can quite accurately simulate how a human brain reacts to the world.

However, the researchers also pointed out some limitations. The model relies on fMRI data, which tracks blood flow in the brain. And blood flow is slow: it takes a few seconds to respond. So the model still cannot capture the split-second activity of individual neurons.
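To see how slow that is, here is a rough sketch that passes a brief burst of neural activity through a common double-gamma approximation of the hemodynamic response. The shape parameters (6 and 16, with a 1/6 undershoot ratio) are a widely used textbook choice that I am assuming here. They are not taken from the TRIBE v2 paper.

```python
import math
import numpy as np

dt = 0.1                       # time step in seconds
t = np.arange(0.0, 30.0, dt)   # 30 seconds of simulated time

def gamma_pdf(t, shape):
    """Gamma density with unit scale, evaluated on an array of times."""
    return t ** (shape - 1) * np.exp(-t) / math.gamma(shape)

# Double-gamma response: an early peak minus a smaller, later undershoot.
hrf = gamma_pdf(t, 6.0) - gamma_pdf(t, 16.0) / 6.0

# A single 100 ms burst of neural activity at t = 0.
neural = np.zeros_like(t)
neural[0] = 1.0

# The fMRI signal is (approximately) the neural activity blurred by the response.
bold = np.convolve(neural, hrf)[: len(t)]
peak_time = float(t[np.argmax(bold)])
print(f"Simulated fMRI signal peaks about {peak_time:.1f} s after the burst")
```

The burst is over in a tenth of a second, but the signal the scanner sees peaks seconds later and takes even longer to fade. Anything faster than that gets smeared together.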

It also doesn’t include active behavior. At the moment, it only models a brain that is passively watching or listening.

But as we scale, this could improve with bigger and better models. And here, we’re talking about something much bigger, potentially bridging the gap between artificial intelligence and human cognition.

Now, I know Meta has open-sourced this project. And I don’t have any personal hate toward Meta, but their track record isn’t exactly clean. They’ve been accused multiple times of data-related issues. Recently, both Meta and Google were even fined for designing addictive products.

Personally, I see Meta as an advertising company. Earlier, showing ads or content to people depended a lot on A/B testing and human focus groups.

But now, if you feed an AI with text, sound, and video, it can predict whether a person’s emotional or attention systems will be triggered or not.

And if you think about it, since Meta designs the algorithms, this could turn into a worst-case scenario for social media addiction.

Let me know what you think. Do you believe we’ll eventually create a complete working digital copy of the human brain?

And how do you think this will impact the future?
