Your computer has a CPU for thinking. RAM for remembering. A screen for showing you things. A keyboard and mouse for listening to you.

Four separate systems. Engineered independently. Bolted together. And that arrangement has worked nicely for the past 50 to 70 years.

Until now.

Meta AI and KAUST just published a paper proposing something I genuinely haven't seen before.

They want the neural network itself to become the computer.

I am not talking about an agent that sits on top of your laptop and clicks buttons for you. The AI itself.. being the machine. Its internal state carrying the computation. Its weights acting as memory. Its generated pixels serving as the interface.

Just one set of learned weights doing everything. They're calling these systems Neural Computers.

I wanna talk about this today. Not because the results are incredible. They're actually kind of bad in some places, and the researchers themselves say so. But because the way they're framing this problem is unlike anything in the current AI conversation. And I think it's worth your time.

So here's where we are right now.

When people talk about AI and computers, they're talking about agents. Claude's Computer Use. OpenAI's CUA. These systems sit on top of a regular computer and drive it. They see the screen, move the cursor, type stuff.

But the actual work? That's still the traditional machine underneath.

The AI is the driver. The car is still a car.

This paper asks.. what if you got rid of the car entirely?

What if the model's latent state.. its hidden layers, its representations.. what if that was the computation, the memory, and the interface, all at once? One unified runtime. Not layers on top of layers.

That's the idea.

And honestly, it sounds insane. The researchers know it sounds insane. They're not pretending they've built a laptop replacement. They explicitly say these are early, rough prototypes.

But they did build something. And how they built it is where this gets interesting.

They used video models.

Think about it for a second. Your computer screen is just a sequence of images. You type a command, the screen updates. You click a button, a window opens. One frame leads to the next, driven by your actions.

So if you train a video model to predict what the next frame should look like based on what you just did.. you've got something that behaves like a computer.

Without actually running one.

No code executes. No OS boots. The model just generates what the screen should show next.
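If that loop feels abstract, here's a toy sketch of it. This is not the paper's code. `rollout` and `toy_predictor` are names I made up, the "frame" is just a string instead of pixels, and the predictor is a hand-written placeholder standing in for a learned video model. It also looks only at the previous frame, where a real model would likely use more history.

```python
from typing import Callable, List

Frame = str   # stand-in for an image; a real system would use pixel arrays
Action = str  # e.g. a single keystroke


def rollout(first_frame: Frame,
            actions: List[Action],
            predict_next_frame: Callable[[Frame, Action], Frame]) -> List[Frame]:
    """Generate frames one at a time, each from the previous frame and an action."""
    frames = [first_frame]
    for action in actions:
        frames.append(predict_next_frame(frames[-1], action))
    return frames


def toy_predictor(frame: Frame, action: Action) -> Frame:
    # Hypothetical placeholder for the learned model: it just echoes keystrokes.
    return frame + ("\n" if action == "Enter" else action)


frames = rollout("$ ", ["l", "s", "Enter"], toy_predictor)
print(frames)  # ['$ ', '$ l', '$ ls', '$ ls\n']
```

The shape is the point: the only thing that ever "runs" is the predictor, called once per action.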

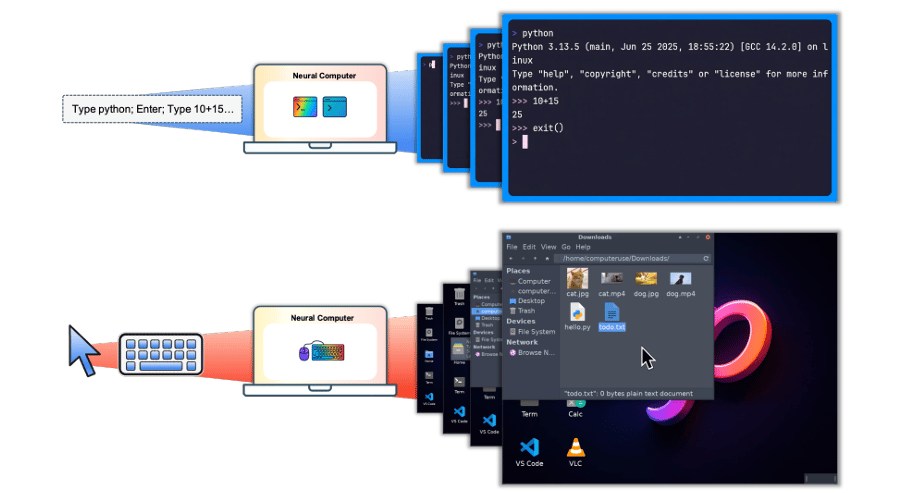

They built two versions. CLIGen for terminal interfaces. GUIWorld for graphical desktops.

For CLIGen, they fine-tuned Wan2.1.. a video generation model.. on about 824,000 clips from real public terminal sessions. Plus 128,000 scripted traces they created in Docker containers.

You give it a prompt like "Type python; Enter; Type 10+15; Enter" and the first frame of a blank terminal. It generates the rest of the session as a video.

For GUIWorld, they recorded desktop interactions on Ubuntu. Mouse movements, clicks, keystrokes. All synced with screen captures at 15 FPS.
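Here's roughly what "synced" could look like. I don't know the paper's actual alignment pipeline, so treat this as a sketch under my own assumptions: the log format and the `bucket_events_by_frame` helper are hypothetical, and the only number taken from the paper is 15 FPS.

```python
from collections import defaultdict

FPS = 15  # capture rate mentioned above


def bucket_events_by_frame(events, fps=FPS):
    """Group (timestamp_seconds, event) pairs by the index of the frame they fall in."""
    by_frame = defaultdict(list)
    for timestamp, event in events:
        by_frame[int(timestamp * fps)].append(event)
    return dict(by_frame)


# Hypothetical input log: seconds since recording start, plus a description.
log = [
    (0.00, "move 100,200"),
    (0.05, "move 104,203"),
    (0.50, "click left"),
    (1.00, "key a"),
]
aligned = bucket_events_by_frame(log)
print(aligned)
# {0: ['move 100,200', 'move 104,203'], 7: ['click left'], 15: ['key a']}
```

Each frame ends up paired with whatever the user did while it was on screen, which is the kind of (frame, action) pairing a next-frame model needs.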

They had about 1,400 hours of random interaction data. Cursors moving aimlessly, random clicks, noise.

And they had just 110 hours of purposeful data. Collected using Claude's Computer Use Agent doing real tasks.

The 110 hours destroyed the 1,400 hours.

On every single metric. The FVD score (Fréchet Video Distance, where lower is better) dropped from 48.17 to 14.72. Structural similarity jumped. Perceptual distance shrank.

Just process that for a second.

110 hours of intentional data beating 1,400 hours of noise. It's not even close. Quality isn't slightly better than quantity here. It's a completely different game.

The Honest Part

The researchers did something I genuinely respect. They didn't hide the bad results.

On the terminal side, the Neural Computer learned to render text well. At 13px fonts, reconstruction was strong.. 40.77 dB PSNR, 0.989 SSIM.
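To put that PSNR number in context, here's the standard formula in plain Python. PSNR is 10 times the log (base 10) of the squared peak value over the mean squared error. I'm assuming 8-bit pixels with a peak of 255. The article's figures don't tell me what scale the paper used, and the helper names are mine.

```python
import math


def psnr(original, reconstructed, max_value=255):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(original) != len(reconstructed):
        raise ValueError("inputs must have the same length")
    mse = sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)
    if mse == 0:
        return float("inf")  # identical inputs
    return 10 * math.log10(max_value ** 2 / mse)


def rmse_for_psnr(psnr_db, max_value=255):
    """Invert the formula: the RMS pixel error implied by a given PSNR."""
    return max_value / (10 ** (psnr_db / 20))


print(round(rmse_for_psnr(40.77), 2))
```

Under that 8-bit assumption, 40.77 dB works out to an RMS error of a little over 2 levels out of 255 per pixel. That's a difference you'd struggle to spot by eye.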

It generated readable terminal frames. Syntax highlighting. Color codes. Proper cursor placement.

Character accuracy reached 0.54 after 60k training steps. Exact line matches hit 0.31.
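The paper's exact definitions of these two metrics aren't something I can quote, so here's one plausible reading, with function names I made up. Character accuracy is the share of positions where the predicted text matches the reference. Exact line match is the share of lines that are identical end to end.

```python
def char_accuracy(pred: str, target: str) -> float:
    """Fraction of character positions that agree (length mismatch counts as errors)."""
    longest = max(len(pred), len(target))
    if longest == 0:
        return 1.0
    matches = sum(p == t for p, t in zip(pred, target))
    return matches / longest


def exact_line_match(pred: str, target: str) -> float:
    """Fraction of lines that are identical character for character."""
    pred_lines, target_lines = pred.split("\n"), target.split("\n")
    longest = max(len(pred_lines), len(target_lines))
    matches = sum(p == t for p, t in zip(pred_lines, target_lines))
    return matches / longest


target = ">>> 10+15\n25"
pred = ">>> 10+15\n26"   # looks right, but the answer is wrong

print(char_accuracy(pred, target))     # 11 of 12 characters match
print(exact_line_match(pred, target))  # 0.5
```

Notice what the toy example shows. A screen can be almost character-perfect and still display the wrong answer, so a rendering score tells you nothing about correctness.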

For a system painting pixels from scratch.. not executing any actual code.. that's real progress.

But then they tested arithmetic.

Can it display the correct answer to 10+15?

Their model got 4%.

Wan2.1 got 0%. Veo 3.1 got 2%. Sora 2 hit 71%, but the researchers suspect that's system-level tricks, not native math ability.

Four percent. On addition.

Then they tried something clever. They fed the correct answer into the prompt. "The answer is 25."

Accuracy jumped from 4% to 83%.

And here's where the paper earns my respect.

They explicitly say this isn't the model doing math. It's rendering what you told it to render. It can show you 25 on screen if you hand it the answer. But it can't figure out that 10+15 equals 25 on its own.

Their own words.. "strong renderers and conditionable interfaces, not native reasoners."

It's the difference between a calculator and a really good whiteboard that draws whatever you dictate.

In a space where every lab dresses up results as breakthroughs, publishing 4% accuracy on addition takes guts. That's honesty.

The desktop side has a different story though. And this one is beautiful.

They needed the model to track the cursor. So they fed it raw x,y coordinates.

8.7% accuracy.

They encoded those coordinates with Fourier features.

13.5%.
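If you haven't met Fourier features before, the general trick is to turn each number into a bundle of sines and cosines at different frequencies, so nearby positions get smoothly varying codes. Here's a minimal sketch. The number of bands, the power-of-two frequency schedule, and the 1920x1080 screen size are my assumptions, not details from the paper.

```python
import math


def fourier_features(x, y, width, height, num_bands=4):
    """Encode a pixel coordinate as sin/cos pairs at doubling frequencies."""
    features = []
    for v in (x / width, y / height):      # normalize each axis to [0, 1]
        for k in range(num_bands):
            freq = (2 ** k) * math.pi
            features.append(math.sin(freq * v))
            features.append(math.cos(freq * v))
    return features


vec = fourier_features(960, 540, width=1920, height=1080)
print(len(vec))  # 16 = 2 axes * 4 bands * (sin, cos)
```

It's a richer code than two raw numbers, but it's still abstract. The model has to work out on its own that these values mean "an arrow belongs at this spot."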

Then they changed the approach entirely. Instead of abstract numbers, they rendered the cursor as a visible SVG arrow directly on the screen frames.

98.7%.

Same model. Same data. Just a different way of showing the cursor to the system.

When the model could see the cursor as a visual object instead of a pair of numbers.. it learned cursor dynamics almost perfectly.
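Here's the same idea in miniature. The paper renders an SVG arrow. I'm using a tiny hand-drawn bitmap and a grid of integers instead, purely as a toy, and `ARROW` and `draw_cursor` are my own hypothetical names.

```python
# A small arrow mask; "X" marks pixels belonging to the cursor.
ARROW = [
    "X...",
    "XX..",
    "XXX.",
    "XXXX",
    "XX..",
]


def draw_cursor(frame, x, y, value=255):
    """Return a copy of `frame` (rows of ints) with the arrow's tip at column x, row y."""
    out = [row[:] for row in frame]
    for dy, mask_row in enumerate(ARROW):
        for dx, cell in enumerate(mask_row):
            yy, xx = y + dy, x + dx
            # Skip pixels that would fall outside the frame.
            if cell == "X" and 0 <= yy < len(out) and 0 <= xx < len(out[0]):
                out[yy][xx] = value
    return out


frame = [[0] * 8 for _ in range(8)]
with_cursor = draw_cursor(frame, x=2, y=1)
for row in with_cursor:
    print("".join("#" if p else "." for p in row))
```

The position is now in the pixels themselves. The model no longer has to translate coordinates into an image, because the input already looks like the output.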

That's not a tweak. That's a 90-point jump from changing how you represent the problem.

How you frame something for a model can matter more than how much data you throw at it. That insight goes way beyond this paper.

They also tested four ways to inject user actions into the transformer. External conditioning, contextual tokens, residual updates, internal cross-attention.

The deeper the actions went into the model's core, the better it responded to clicks and keystrokes.

Shallow integration doesn't cut it. The model needs to deeply fuse what you're doing with what the screen should show next.

My Take

The paper lays out a roadmap toward what they call a Completely Neural Computer. Turing complete. Universally programmable. Behavior-consistent. They're honest that none of it is close. Models can't grow memory on the fly. They forget between sessions. There's no separation between using the system and modifying it.

But they argue that the programming language of a Neural Computer is whatever the model learned from data. Prompts are programs. Demonstrations are specifications. Every keystroke, mouse movement, and screen change ever logged is potential training data for this kind of system.

That data dwarfs every codebase ever written. By orders of magnitude.

Am I saying this replaces your laptop? No. A system that can't add isn't replacing anything.

But the question matters. Do we keep stacking smarter layers on top of the same 70-year-old architecture.. or is there a future where the machine itself is learned?

Someone drew the map. We'll see who walks it.

What's your definition of a computer? Does it have to run code on silicon, or could a learned system count?
