OpenAI dropped GPT-5.5 a few hours ago, and as you'd expect, it fits right into the usual launch cycle of the big labs. You can try it for free in Codex, so go try it and share your thoughts. But this post is about something else: research from MIT.

GPT-5 starts breaking around 272,000 tokens. If you feed it more, it begins to hallucinate dates, forget the start of your document, and confidently make things up that aren’t there.

But three MIT researchers fed it 10 million tokens and it kept going.

And no, they didn’t tweak the model’s weights or use a new architecture. They simply wrapped GPT-5 in a Python loop and made it talk to itself.

The paper is called "Recursive Language Models." And if you've been reading me for a while, you know why I'm writing about it today.

A few weeks back, I said AGI needed breakthroughs in two specific areas: continual learning and context. I said context wasn't really a size problem but an algorithmic one, and that we needed a way to process a million pages as effortlessly as a single paragraph.

This paper might be the first real attempt at that.

What context rot actually feels like

Every AI you've used has a ceiling. ChatGPT, Claude, Gemini, whatever. They all have a number. A maximum amount of stuff they can hold in their head at once. That's the context window.

And inside that window, the quality degrades.

It has a name: context rot. You paste 50 pages in. The model handles it okay. You paste 500 pages in. It starts confusing things. You paste 5,000 pages in, and it falls apart entirely. Not because it ran out of space, but because the longer the context, the worse it thinks.

This is why every agentic AI breaks after a while. Why coding agents lose track of what you were doing three hours ago. Why your 100-page legal doc gets summarized wrong.

The model isn't stupid. It's drowning.

The industry has been trying to solve this for years. Longer windows. Better attention mechanisms. Summarization tricks that compress old context. Retrieval systems that fetch the "relevant" pieces. All of it is basically just pushing the wall further out or quietly deleting parts of the prompt and hoping you don't notice.

What the nerds at MIT did differently: they didn't try to push the wall further out. They challenged the idea of the wall itself.

What they actually did

Don't feed the long prompt into the model. Don't feed it at all.

Instead, drop the prompt into a Python environment (a REPL) as a variable, and give the model access to that environment. Now the model can write code. It can peek at chunks. It can split things up. It can call itself on those chunks. It can store answers in variables. It can stitch them back together.
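Here is a minimal sketch of that loop in Python, assuming a scripted stand-in for the root model so it runs without an API key. The names `run_rlm`, `llm_query`, `FINAL_ANSWER`, and both stub models are hypothetical, chosen for illustration; this is not the paper's code. What it shows: the long prompt only ever exists as the `context` variable, and the root model sees some metadata plus a truncated slice of whatever its own code prints.

```python
import contextlib
import io
from typing import Callable, List

def fake_sub_model(prompt: str) -> str:
    # Hypothetical stand-in for a sub-model call: counts lines that mention "error".
    hits = [line for line in prompt.splitlines() if "error" in line]
    return f"{len(hits)} error lines"

def scripted_root_model(transcript: List[str]) -> str:
    # Hypothetical stand-in for the root model: returns the next snippet by turn number.
    # A real root model would read `transcript` and write this code itself.
    steps = [
        # Turn 1: peek at the start of the long prompt.
        "print(context[:40])",
        # Turn 2: split it into 100-line chunks.
        "lines = context.splitlines()\n"
        "chunks = ['\\n'.join(lines[i:i + 100]) for i in range(0, len(lines), 100)]\n"
        "print(len(chunks), 'chunks')",
        # Turn 3: call the sub-model on each chunk, then stitch the notes together.
        "notes = [llm_query(c) for c in chunks]\n"
        "FINAL_ANSWER = sum(int(n.split()[0]) for n in notes)",
    ]
    return steps[len(transcript) - 1]

def run_rlm(context: str,
            root_model: Callable[[List[str]], str],
            llm_query: Callable[[str], str],
            max_turns: int = 10,
            max_output_chars: int = 500) -> str:
    # The long prompt is a variable in the environment, never part of the root model's prompt.
    namespace = {"context": context, "llm_query": llm_query}
    # The root model starts out knowing only metadata about the context.
    transcript = [f"context is a str of {len(context)} characters"]
    for _ in range(max_turns):
        code = root_model(transcript)
        buffer = io.StringIO()
        with contextlib.redirect_stdout(buffer):
            exec(code, namespace)  # model-written code: sandbox this in any real system
        # Only a truncated view of the printed output goes back to the root model.
        transcript.append(buffer.getvalue()[:max_output_chars])
        if "FINAL_ANSWER" in namespace:
            # Read the answer straight from the variable instead of asking the model to restate it.
            return str(namespace["FINAL_ANSWER"])
    raise RuntimeError("root model never set FINAL_ANSWER")
```

For example, with a 2,000-line log stored in `ctx`, `run_rlm(ctx, scripted_root_model, fake_sub_model)` splits the log into 20 chunks, counts matching lines chunk by chunk, and returns the total as a string. The root model never reads the log itself.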

The model isn't trying to hold 10 million tokens in its head. It's writing programs that operate on 10 million tokens.

The way you would, if someone handed you a 1,000-page document and asked you a question about it. You wouldn't try to remember every word. You'd flip to the index. Skim the chapters. Take notes. Compare sections. Forget the parts that don't matter.

That's what this does. But the notes, the skimming, the comparing: all of that is the model calling smaller versions of itself on smaller pieces.
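The recursive part can be sketched like this, under loud assumptions: a character budget stands in for the model's context window, `toy_model` is a hypothetical keyword filter standing in for a real LLM, and the split rule (halve by lines) is hard-coded. In the paper the model decides for itself how to decompose the input; `rlm_answer`, `toy_model`, and `budget` are illustrative names only.

```python
from typing import Callable

# (question, text) -> answer or notes
Model = Callable[[str, str], str]

def toy_model(question: str, text: str) -> str:
    # Hypothetical stand-in for an LLM: keeps only the lines that mention the question keyword.
    return "\n".join(line for line in text.splitlines() if question in line)

def rlm_answer(question: str, text: str, model: Model, budget: int = 200) -> str:
    # Base case: the text fits in the (toy) context budget, so the model reads it directly.
    if len(text) <= budget:
        return model(question, text)
    lines = text.splitlines()
    if len(lines) < 2:
        return model(question, text)  # a single oversized line cannot be split further here
    # Recursive case: split in half and give each half its own call.
    mid = len(lines) // 2
    halves = ("\n".join(lines[:mid]), "\n".join(lines[mid:]))
    notes = "\n".join(n for n in (rlm_answer(question, h, model, budget) for h in halves) if n)
    # Stitch: read the combined notes, recursing again only if that shrinks the input.
    if len(notes) < len(text):
        return rlm_answer(question, notes, model, budget)
    return model(question, notes)
```

No single call ever sees more than `budget` characters unless a line is too long to split, which is the whole trick: the notes shrink as they move up the tree, so the final call reads a summary instead of the document.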

The authors include examples of what these trajectories actually look like. In one of them, GPT-5 is handed a 1,000-document corpus and asked a multi-hop question about a festival winner. It writes a regex. Scans for keywords like "festival" and "pageant." Finds a relevant chunk. Calls a sub-model on just that chunk. Gets an answer. Double checks it. Returns "Maria Dalmacio." Correct.
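The shape of that trajectory (filter cheaply with a regex, then spend model calls only on what survives) can be sketched as follows. Assumptions: the corpus is a list of strings, `toy_sub_model` is a hypothetical regex stand-in for the sub-model, and the majority-vote "double check" is a simplification of ours, not what GPT-5 actually did. The corpus in the test is synthetic.

```python
import re
from collections import Counter
from typing import Callable, List, Optional

# Keyword filter modeled on the trajectory described above; case-insensitive.
KEYWORDS = re.compile(r"\b(festival|pageant)\b", re.IGNORECASE)

def find_snippets(corpus: List[str], window: int = 200) -> List[str]:
    # Return the text around each keyword hit instead of whole documents.
    snippets = []
    for doc in corpus:
        for match in KEYWORDS.finditer(doc):
            start = max(0, match.start() - window)
            snippets.append(doc[start:match.end() + window])
    return snippets

def toy_sub_model(question: str, snippet: str) -> str:
    # Hypothetical stand-in for a sub-model call: pulls a two-word name after "won by".
    found = re.search(r"won by ([A-Z][a-z]+ [A-Z][a-z]+)", snippet)
    return found.group(1) if found else ""

def answer_from_corpus(question: str, corpus: List[str],
                       sub_model: Callable[[str, str], str]) -> Optional[str]:
    # Only the matching snippets ever reach the sub-model.
    answers = [a for a in (sub_model(question, s) for s in find_snippets(corpus)) if a]
    if not answers:
        return None
    # Crude double check (our assumption): keep the answer most snippets agree on.
    return Counter(answers).most_common(1)[0][0]
```

In a 1,000-document corpus where only two documents mention a festival, this makes three sub-model calls instead of reading all 1,000 documents, which is roughly why a trajectory like the one above can stay cheap.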

The whole thing cost 8 cents.

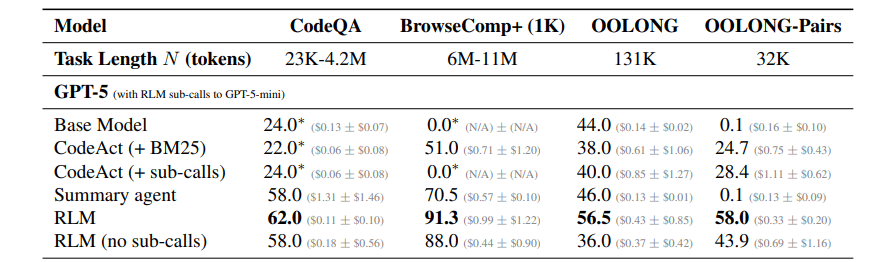

And the results scale in ways that should genuinely surprise you. On tasks that require reasoning across millions of tokens, GPT-5 scores near zero. The RLM version scores 91%. On an information-dense pairs task where base GPT-5 gets 0.1%, the RLM version gets 58%.

TBH, this is HUGE.

What this actually means

This isn't a new model, which means it should work with whatever model you point it at: GPT-5, Claude, Qwen, Gemini. Nothing gets retrained. You're building on top of what already exists.

For two years, the story has been: if you want your AI to handle longer inputs, wait for the next model. Bigger parameters. More training. New architecture. Be patient. It's coming.

This paper says no. The models we already have can handle orders of magnitude more context. Today. You just have to stop feeding the whole prompt into them.

The researchers also took a tiny 8B open-source model and fine-tuned it on 1,000 examples to work natively as an RLM. That single weekend of training pushed its long-context performance up by 28.3%.

Small open models have been bad at long context forever. That's just been the story. A thousand examples might have quietly changed the story.

The paper has real limitations. The runtime is slow because the sub-calls aren't run asynchronously. Smaller models without strong coding skills struggle. And there's a strange failure case where the model builds the correct answer in a variable, verifies it, then ignores its own work and generates a wrong one from scratch. The authors flag it honestly.
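On the speed limitation: if sub-calls go out one after another, a long document means a long wait. Here is a minimal sketch of running them concurrently with Python's `asyncio.gather`, assuming a hypothetical `call_sub_model` that fakes 0.1 s of API latency with `asyncio.sleep`; a real client would await an HTTP request instead.

```python
import asyncio
import time
from typing import List

async def call_sub_model(chunk: str) -> str:
    # Hypothetical stand-in for an async LLM API call with fixed latency.
    await asyncio.sleep(0.1)
    return f"{len(chunk.split())} words"

async def map_chunks(chunks: List[str], max_concurrency: int = 8) -> List[str]:
    # Cap the number of in-flight calls, e.g. to stay under a provider's rate limit.
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded(chunk: str) -> str:
        async with semaphore:
            return await call_sub_model(chunk)

    # gather returns results in input order, even though the calls overlap in time.
    return await asyncio.gather(*(bounded(c) for c in chunks))
```

With 16 chunks and 8 concurrent slots, `asyncio.run(map_chunks(chunks))` finishes in about two rounds of latency (roughly 0.2 s here) instead of sixteen. The semaphore is the design choice worth keeping: unbounded fan-out is the fastest way to hit a rate limit.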

But clean ideas in AI tend to get better fast. And this idea is very clean.

When I wrote about the gentle singularity, I said context was one of two walls standing between us and AGI. I was picturing something exotic. New attention mechanisms. Years of research. Some lab breakthrough.

Not a Python REPL. Not a scaffold you could prototype in an afternoon.

So if a wall this fundamental can fall to an idea this simple, what else have we been assuming is hard because nobody tried the obvious thing first?

Coding is almost solved. Context is starting to bend. The last wall is continual learning. Models that actually change from what you tell them, without being retrained.

Let me know what you think. If a model can recursively query itself to handle any context length, is that intelligence? Or is it clever plumbing around a model that still fundamentally can't think?

If you made it this far, you're not a casual reader. You actually think about this stuff.

So here's my ask. If this article made you think, even a little, share it with one person. Just one. Someone who's in the AI space. Someone who reads. Someone who would actually sit with these ideas instead of scrolling past them.

That's how this newsletter grows. Not through ads or algorithms. Through you sending it to someone and saying "read this."

Research Paper: Recursive Language Models