Here's the thing nobody warns you about when you start delegating real work to AI.

The model doesn't tell you when it messes up.

It just hands the document back. It looks clean. The structure is good, maybe even better than what you gave it. And you move on with your day not knowing that three rows are gone. Or a column got renamed. Or a function got rewritten in a way the rest of your codebase doesn't know about.

I vibe code. I delegate to Claude constantly. So when Microsoft Research dropped a paper this week called "LLMs Corrupt Your Documents When You Delegate", I read it like it was about me.

And honestly? It was.

I think it might be the most important AI paper of the month. Not because of what it predicts. Because of what it actually measured.

The Test That Actually Made Sense

Here's what the researchers did. And I love how clean this is.

Take any document. A code file, a 3D model, a recipe. Pick something. Now ask an AI to edit it: "Convert the measurements from cups to milliliters." Then ask the AI to reverse the edit: "Convert them back to cups." Or something like that.

If the AI is any good, you should get back the original. Right? Simple round trip.

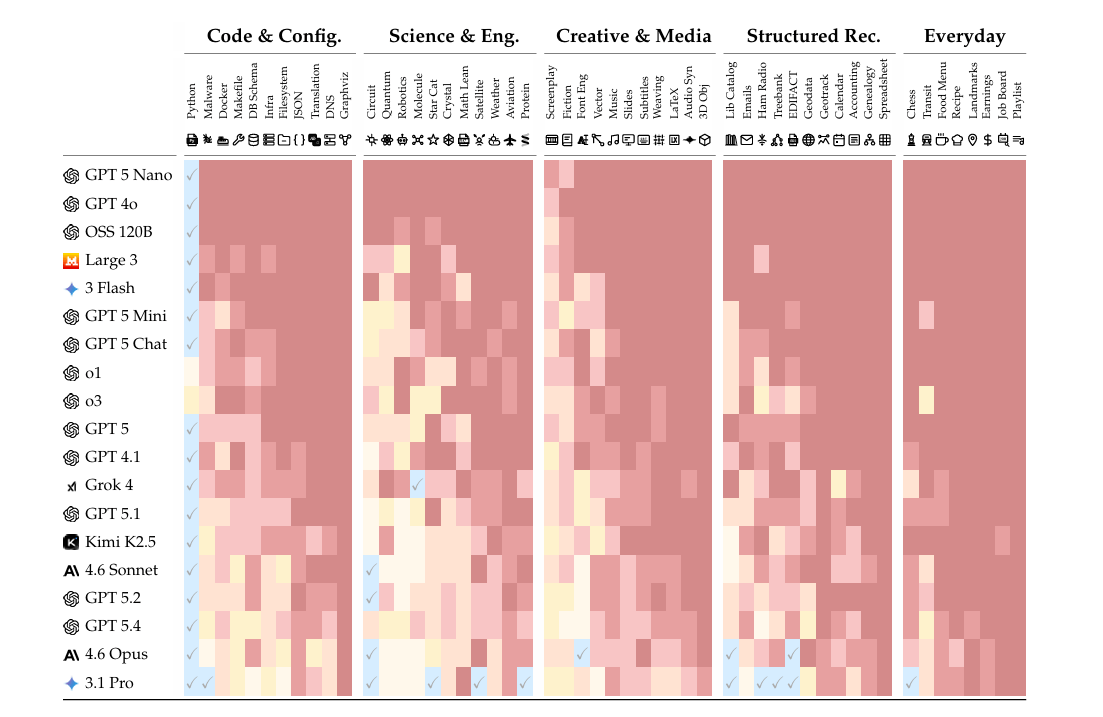

They called it the round-trip relay. They built 310 of these test environments across 52 professional domains. The kind of thing you'd actually ask ChatGPT or Claude to handle.

Then they ran 19 AI models through it. Twenty edits per document. Just twenty.
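If you want to try the idea on your own documents, here's a rough Python sketch of a round-trip relay. It's my approximation, not the paper's code. `edit` is a hypothetical stand-in for whatever model call you'd make, and the score is a plain `difflib` similarity against the original, which may not match how the researchers actually graded content loss. The toy editor silently drops a line on one call so you can watch a critical failure land.

```python
import difflib
from typing import Callable

# Hypothetical editor signature: (document, instruction) -> edited document.
# In a real test this would wrap an LLM API call.
Editor = Callable[[str, str], str]


def similarity(a: str, b: str) -> float:
    """0-100 score of how closely `b` matches `a`. A stand-in metric;
    the paper's actual scoring may differ."""
    return 100.0 * difflib.SequenceMatcher(None, a, b).ratio()


def round_trip_relay(doc: str, pairs: list[tuple[str, str]], edit: Editor) -> list[float]:
    """Run each (forward, inverse) instruction pair in sequence and score the
    result against the ORIGINAL document after every completed round trip."""
    current = doc
    scores = []
    for forward, inverse in pairs:
        current = edit(current, forward)  # the edit
        current = edit(current, inverse)  # the edit that should undo it
        scores.append(similarity(doc, current))
    return scores


def make_toy_editor(fail_on_call: int) -> Editor:
    """Toy model: returns text unchanged, except on one call where it
    silently drops the last line (a simulated critical failure)."""
    calls = 0

    def edit(text: str, instruction: str) -> str:
        nonlocal calls
        calls += 1
        if calls == fail_on_call:
            return "\n".join(text.splitlines()[:-1])
        return text

    return edit


doc = "flour: 2 cups\nsugar: 1 cup\nmilk: 1 cup"
pairs = [("Convert cups to milliliters.", "Convert them back to cups.")] * 3
scores = round_trip_relay(doc, pairs, make_toy_editor(fail_on_call=3))
print(scores)  # [100.0, 81.25, 81.25]
```

Notice that the damage never heals. Once the line disappears in round trip two, every later round trip inherits the loss. That's how errors pile up over a long session.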

Here's what they found.

Gemini 3.1 Pro, the best model in the entire test, lost 19% of the content after 20 edits. Claude 4.6 Opus lost 27%. GPT-5.4 lost 28%. The average across all 19 models? 50%. Half the document. Gone or wrong.

Just process that for a second.

The models we're handing our work to... quietly delete or corrupt somewhere between a fifth and half of everything they touch. And the longer you go, the worse it gets. They tested up to 100 interactions. The degradation just kept going.

And it's not death by a thousand cuts. The researchers analyzed every single failure. About 80% of the total damage came from "critical failures": single round trips where the model just... broke something, losing 10 to 30 points in one go.

Most of the time the AI does fine. And then occasionally it nukes a section. Silently. Without telling you.
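That "80% from critical failures" number is just arithmetic over a score trajectory. Here's a minimal sketch, assuming you have one score per round trip starting at 100, and assuming "critical" means a single drop of at least 10 points (my reading of the 10-to-30-point range, not necessarily the paper's exact definition). The trajectory in the example is made up for illustration.

```python
def critical_failure_share(scores: list[float], threshold: float = 10.0) -> float:
    """Fraction of total damage that came from single round trips whose
    score dropped by at least `threshold` points.

    scores[0] is the untouched document (100.0); each later entry is the
    score after one more round trip. Recoveries (score going up) are ignored.
    """
    drops = [max(0.0, prev - cur) for prev, cur in zip(scores, scores[1:])]
    total = sum(drops)
    if total == 0:
        return 0.0  # no damage at all
    critical = sum(d for d in drops if d >= threshold)
    return critical / total


# Toy trajectory (NOT from the paper): small losses plus one big hit.
toy = [100.0, 99.0, 98.0, 78.0, 77.0, 77.0]
print(f"{critical_failure_share(toy):.0%}")  # 87%
```

Averaged out, that trajectory loses under 5 points per round trip, which sounds mild. The one bad step is what actually does the damage.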

Why This Hits Different

We talk a lot about hallucinations in AI. But hallucinations are the visible failures. "Napoleon won the Battle of Waterloo." You catch that one.

What this paper documents is the invisible failure mode. The model produces something that looks right. The structure is intact. The formatting is clean. But three line items got dropped. A column got mislabeled. A function got renamed in a way the rest of the codebase doesn't know about.

There's a chart in the paper showing model performance across all 52 domains. Python is the only domain where most models are "ready" for delegation. And even the best model is ready in only 11 of the 52 domains. That's it.

It's wild how broad the failure pattern is.

The researchers tested whether giving the AI tools makes things better. You know, the whole agentic AI thing that everyone's been hyping for the past year. Let the model write code, read files, make targeted edits instead of regenerating the whole document.

It made things worse. By 6% on average.

The agent harness, the thing every AI company has been pitching as the path to reliable AI work, made the models corrupt MORE documents, not fewer. Because tool use creates context overhead. Because models are still bad at picking the right tool. Because the gap between knowing what to do and doing it precisely is still huge.

The paper also tested what happens when you add irrelevant files to the context. Like, the kind of clutter that exists in real work. More distractor files = more corruption. Bigger documents = more corruption. Both compound the longer you run.

There's also a deletion vs corruption breakdown. Weaker models tend to just delete content. They drop sections. You'd notice that. Things go missing.

Frontier models? They corrupt. They keep the structure. They make it look complete. They just change things in ways you wouldn't notice unless you read carefully.

The better the model gets, the more invisible the failure becomes.
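You can get a rough version of the deletion-vs-corruption split on your own files with Python's standard `difflib`. This is a line-level approximation I'm assuming for illustration, not the paper's method: lines that vanish outright count as deleted, and lines replaced by different text count as corrupted.

```python
import difflib


def classify_damage(original: str, returned: str) -> dict[str, int]:
    """Line-level tally of how a returned document differs from the original.

    'deleted'   = original lines that are simply gone
    'corrupted' = original lines replaced by different text
    'inserted'  = new lines with no counterpart in the original
    """
    a, b = original.splitlines(), returned.splitlines()
    counts = {"deleted": 0, "corrupted": 0, "inserted": 0}
    for tag, i1, i2, j1, j2 in difflib.SequenceMatcher(None, a, b).get_opcodes():
        if tag == "delete":
            counts["deleted"] += i2 - i1
        elif tag == "replace":
            counts["corrupted"] += i2 - i1
        elif tag == "insert":
            counts["inserted"] += j2 - j1
    return counts


original = "id,item,qty\n1,bolt,10\n2,nut,20\n3,washer,30"
print(classify_damage(original, "id,item,qty\n1,bolt,10\n3,washer,30"))
# {'deleted': 1, 'corrupted': 0, 'inserted': 0}
print(classify_damage(original, "id,item,qty\n1,bolt,10\n2,nut,20\n3,washer,3"))
# {'deleted': 0, 'corrupted': 1, 'inserted': 0}
```

The second case is the frontier-model failure. The row count is right, the structure is intact, and only the diff tells you that 30 became 3.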

My Take

I'm not the guy who dunks on AI for engagement. I genuinely believe in where this is going. I've written about solo billion-dollar companies. About AI helping cure diseases. I'm bullish.

But this paper exposes something important.

The pitch for vibe coding, for delegation, for "let the AI handle it" assumes the AI handles it correctly. The whole productivity argument falls apart if you have to verify every single edit. If you have to spot-check every output.

Right now, when you delegate work to a frontier model on a non-Python task... you're playing with corruption you can't see. The model isn't lying. It's just... forgetting things. Misplacing things. Quietly altering them.

And the conditions of real work make it worse. Bigger documents. More files in context. Longer sessions. None of these are edge cases.

This is why I think the paper matters more than most. It's not theoretical. It's about right now. The thing you and I are doing when we paste a document into Claude and say "fix this and make it cleaner."

The roughly 25% you can't see is the part that should worry you.

But here's the thing. The paper isn't saying AI is bad. It's saying AI capability is jagged. Brilliant in some domains, broken in others, and we have no good way of telling which is which without testing each task ourselves.

There's actually a hopeful note buried in the data. GPT-4o scored 14.7% on this benchmark. GPT-5.4 scored 71.5%. That's 16 months of progress. So the gap is closing. Fast.

But where we are right now? The same model that can help build a billion-dollar company and help write a cancer vaccine can also quietly delete three rows from your spreadsheet without telling you. Both are true at the same time.

So when someone says "just delegate it to AI", the question is... delegate what? In which domain? For how many edits? With what document size? With or without other files in the context?

Because the answer changes whether you'll get back something usable, or something silently corrupted that you'll trust because it looks right.

I'm not saying don't use AI. I use it for hours every day. I'm saying use it the way you'd use a brilliant new intern who occasionally hands work back with sentences missing. You wouldn't fire the intern. You'd just read the work before sending it out.

That's what we have right now. Not a delegate. An assistant whose work you still have to read.

The "delegation" word is doing a lot of work that the technology can't quite back up yet.
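For spreadsheets, some of that reading can be automated. Here's a small Python check, a sketch assuming your table is CSV and has a column that uniquely identifies each row (`key` is a hypothetical parameter you'd set yourself). It flags two of the silent failures from the top of this piece: missing rows and renamed columns. It won't catch a value that was quietly changed, so treat it as a first pass, not a replacement for reading.

```python
import csv
import io


def check_table_edit(original_csv: str, returned_csv: str, key: str) -> list[str]:
    """Warn about structural damage between two versions of a CSV table:
    dropped or renamed columns, and rows whose `key` value disappeared.
    Assumes `key` is a column in the original table."""
    orig = csv.DictReader(io.StringIO(original_csv))
    new = csv.DictReader(io.StringIO(returned_csv))
    orig_rows, new_rows = list(orig), list(new)
    orig_cols, new_cols = orig.fieldnames or [], new.fieldnames or []
    warnings: list[str] = []

    # A renamed column shows up as one missing column plus one new column.
    missing_cols = [c for c in orig_cols if c not in new_cols]
    extra_cols = [c for c in new_cols if c not in orig_cols]
    if missing_cols:
        warnings.append(f"missing columns: {missing_cols}")
    if extra_cols:
        warnings.append(f"new columns: {extra_cols}")

    # Original rows whose key no longer appears in the returned table.
    # (If the key column itself was renamed, every row is reported here.)
    new_keys = {row.get(key) for row in new_rows}
    missing_rows = [row[key] for row in orig_rows if row[key] not in new_keys]
    if missing_rows:
        warnings.append(f"missing rows ({key}): {missing_rows}")
    return warnings


before = "id,item,qty\n1,bolt,10\n2,nut,20\n3,washer,30\n"
after = "id,item,quantity\n1,bolt,10\n3,washer,30\n"
for warning in check_table_edit(before, after, key="id"):
    print(warning)
# missing columns: ['qty']
# new columns: ['quantity']
# missing rows (id): ['2']
```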

What's the actual line for you? Where does delegating to AI make sense, and where does it just create silent debt you'll pay later?

If you made it this far, you're not a casual reader. You actually think about this stuff.

So here's my ask. If this article made you think, even a little, share it with one person. Just one. Someone who's in the AI space. Someone who reads. Someone who would actually sit with these ideas instead of scrolling past them.

That's how this newsletter grows. Not through ads or algorithms. Through you sending it to someone and saying "read this."

Research Paper: LLMs Corrupt Your Documents When You Delegate